Researcher in the Spotlight: Liang Li

My research concerns robust self-localization algorithms for mobile robots.

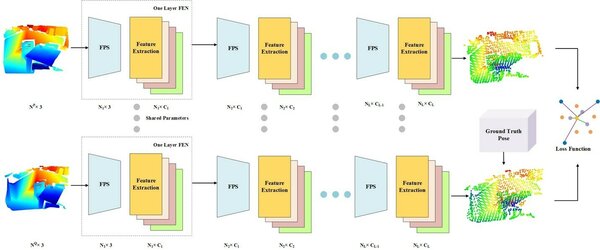

Hello, my name is Liang Li and my research concerns robust self-localization algorithms for mobile robots. Within the Mobile Perception Systems research group of the Department of Electrical Engineering, I utilize deep learning-based methods to match two input point clouds – a kind of odometry. The odometry results are then fused with robot motion data provided by inertial measurement units and wheel encoders. The deep learning-based point cloud matching is illustrated in Figure 1.

Solving the sampling problem

Traditional point-wise registration methods do not perform well under random initialization. Local feature-based registration algorithms have therefore emerged, providing robust initial point-to-point correspondences. However, all current learning-based methods need to select local patches or interest points in advance, which means they are not end-to-end methods. In addition, most current methods need to convert point clouds into another type of feature before feeding them into deep neural networks.

Our method aims to solve these problems and to generate precise registration results. We have created a direct deep learning-based point cloud registration method: DPFNet. It does not need to sample small patches from the overlapping region but takes the point cloud as input directly. In the many cases in which we have no a priori knowledge or good initialization, DPFNet solves the sampling problem. We have also proposed a soft-correspondence-based loss function to deal with the loose point pairs introduced by Farthest Point Sampling (FPS). Experimental results have validated the proposed method on both indoor and outdoor datasets and in terms of generalization. Our method is comparable with the state-of-the-art and outperforms it in some respects.
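To make the FPS step more concrete, here is a minimal sketch of the generic greedy Farthest Point Sampling algorithm in plain Python. It is not taken from DPFNet; the function name `farthest_point_sampling`, its parameters and the toy point cloud are assumptions made purely for illustration.

```python
import math

def farthest_point_sampling(points, k, start=0):
    """Return the indices of k points picked by greedy farthest point sampling.

    Generic textbook version, not the DPFNet implementation.
    """
    if not 0 < k <= len(points):
        raise ValueError("k must be between 1 and len(points)")
    selected = [start]
    # Distance from every point to its nearest already-selected point.
    min_dist = [math.dist(p, points[start]) for p in points]
    while len(selected) < k:
        # Pick the point that is farthest from the current selection.
        nxt = max(range(len(points)), key=min_dist.__getitem__)
        selected.append(nxt)
        # Update nearest-selected distances with the newly added point.
        for i, p in enumerate(points):
            d = math.dist(p, points[nxt])
            if d < min_dist[i]:
                min_dist[i] = d
    return selected

# Hypothetical toy cloud of five 3D points.
cloud = [
    (0.0, 0.0, 0.0),
    (0.1, 0.0, 0.0),
    (1.0, 0.0, 0.0),
    (0.0, 0.8, 0.0),
    (0.9, 0.1, 0.0),
]
sample = farthest_point_sampling(cloud, 3)
print(sample)  # [0, 2, 3]
```

Because each cloud is sampled independently, the points kept in one cloud generally have no exact counterpart among the points kept in the other. This is one way to picture the "loose point pairs" that a soft-correspondence loss has to tolerate.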

Achieving the best performance

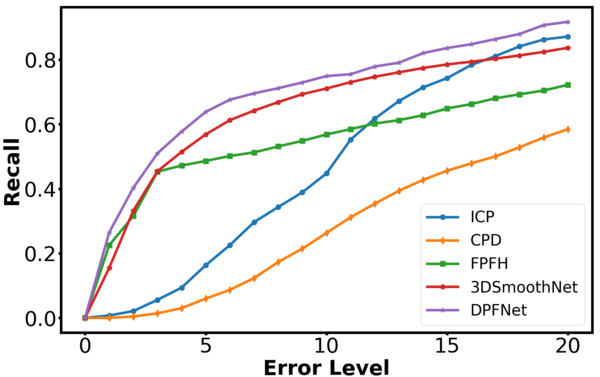

The recall curves of different methods under various thresholds are shown in Figure 2, from which we can see that DPFNet outperforms the other four methods in terms of recall. Though the proposed method is not the best in terms of precision, the gap to the best method is tiny. This indicates that precision is good across all methods so long as the point clouds are matched successfully.
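As a rough illustration of how a recall curve of this kind can be computed, the sketch below assumes that recall at a threshold is the fraction of point cloud pairs whose registration error falls at or below that threshold. This definition, the function name `recall_curve` and the error values are assumptions for illustration only; they are not the data or the exact metric behind Figure 2.

```python
def recall_curve(errors, thresholds):
    """Fraction of registrations with error <= each threshold (assumed definition)."""
    n = len(errors)
    return [sum(e <= t for e in errors) / n for t in thresholds]

# Made-up per-pair registration errors, not experimental results.
errors = [0.05, 0.12, 0.30, 0.08, 0.50]
thresholds = [0.1, 0.2, 0.4]

curve = recall_curve(errors, thresholds)
print(curve)  # [0.4, 0.6, 0.8]
```

Plotting such a list against the thresholds for each method gives one curve per method, and a curve that lies higher indicates that more pairs were matched successfully at the same tolerance.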

Bob Hendrikx also works on localization for robots, but he uses handcrafted features such as pillars. Our methods can serve as baselines for one another: we test our algorithms to see what the pros and cons of deep learning-based methods are when compared to handcrafted feature-based localization. The basic conclusion is that deep learning-based methods need very precise data in order to train the neural network, but the results are precise and robust. Handcrafted feature-based localization does not need precise data, but the robustness is usually not as good.

Future stability

These results will be of interest to companies or researchers who need to localize their robots robustly. For indoor robots in particular, traditional dead reckoning or odometry methods suffer from drift and accumulated errors. Our method can improve the robustness of localization substantially without being affected by frequent environmental changes. For future work, we plan to improve the point sampling method, as some salient points need to be selected to ensure that sampling is stable across different point clouds.